Twelve employees. One billion dollars in startup funding. That is the kind of math that usually signals a gold rush mentality. But the founder of AMI Labs, Yann LeCun, is not chasing the same vein as everyone else. The former chief AI scientist at Meta walked away from big tech late last year to build something deliberately smaller. He thinks the technology we currently call AI, the sprawling large language models that power chatbots and code generators, is fundamentally the wrong path for meaningful, long-term progress.

LeCun is not betting against AI. He is betting against the architecture that dominates it. His new venture, Advanced Machine Intelligence Labs, is positioning itself as a pure research organization. The goal is not a product launch next quarter or even next year. LeCun has openly stated that AMI Labs might not produce something saleable for half a decade. In an industry obsessed with shipping fast, that kind of patience is either visionary or naive. The market seems to lean toward visionary, given the ten-figure check investors wrote.

Why Modular AI Could Outperform the Giant Black Box

The core of LeCun’s argument is elegant in its simplicity. Large language models are generalists. They are trained on a firehose of internet text, then tuned to produce plausible-sounding answers for almost any prompt. That breadth comes at a staggering cost. Training and running these models requires fleets of GPUs, immense data centers, and electricity bills that would make a small country wince. The repeated, multi-step prompting that reasoning models rely on only adds to the expense.

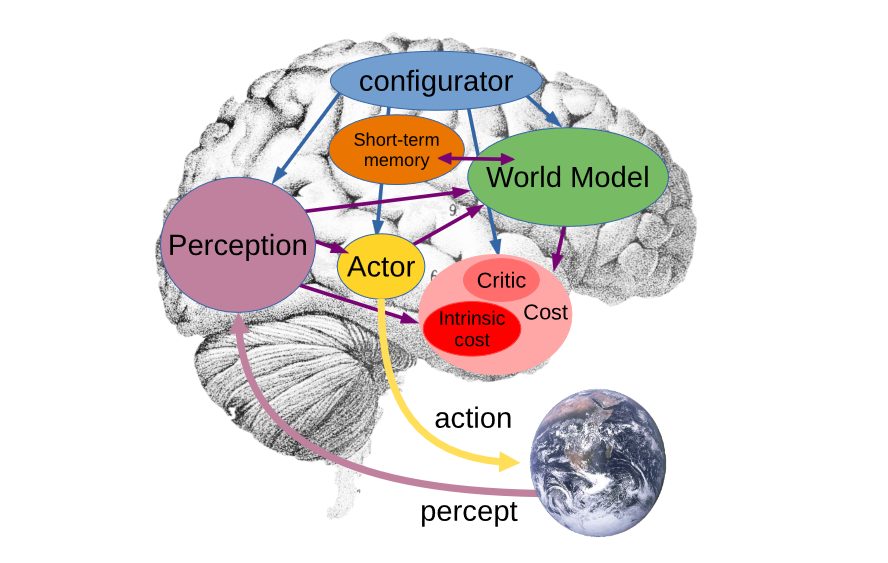

AMI Labs proposes a different blueprint. Instead of one monolithic model, their approach uses a collection of modular components. Each piece is specialized, trained for a specific domain or role. Think of it less like a single brain and more like a team of experts in a room. One module handles perception, processing video, audio, or text data using techniques like deep learning vision algorithms. Another acts as a world model, encoding the rules and context of the AI’s operating environment. An actor module proposes the next action based on reinforcement learning. A critic module evaluates those proposals against hard-coded rules and short-term memory. Finally, a configurator orchestrates how information flows between all these parts.
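To make the division of labor concrete, here is a minimal sketch of how the five roles described above could be wired together. It is not AMI Labs' design: the thermostat environment, every function name, and the constants are hypothetical, and the actor simply lists all actions where a real system would use a learned policy.

```python
import math

# Everything below is a toy, hypothetical environment for illustration only.
TARGET_TEMP = 21.0          # assumed goal for the toy environment
SAFE_RANGE = (10.0, 30.0)   # hard-coded rule enforced by the critic
EFFECT = {"heat": 1.0, "cool": -1.0, "idle": 0.0}
OPPOSITE = {"heat": "cool", "cool": "heat"}


def perceive(raw: str) -> dict:
    """Perception: turn a raw reading such as 'temp=25' into structured state."""
    key, value = raw.split("=")
    return {key.strip(): float(value)}


def world_model(state: dict, action: str) -> dict:
    """World model: predict the next state if `action` is taken."""
    return {"temp": state["temp"] + EFFECT[action]}


def actor(state: dict) -> list:
    """Actor: propose candidate actions (a learned policy in a real system)."""
    return list(EFFECT)


def critic(predicted: dict, action: str, memory: list) -> float:
    """Critic: cost of a proposal, using hard rules and short-term memory."""
    low, high = SAFE_RANGE
    if not low <= predicted["temp"] <= high:
        return math.inf                      # hard rule: never leave the safe range
    cost = abs(predicted["temp"] - TARGET_TEMP)
    if memory and OPPOSITE.get(memory[-1]) == action:
        cost += 0.5                          # discourage reversing the last action
    return cost


class Configurator:
    """Configurator: routes information between the other modules."""

    def __init__(self, memory_size: int = 5):
        self.memory = []                     # short-term memory of recent actions
        self.memory_size = memory_size

    def step(self, raw: str) -> str:
        state = perceive(raw)
        best = min(
            actor(state),
            key=lambda a: critic(world_model(state, a), a, self.memory),
        )
        self.memory = (self.memory + [best])[-self.memory_size:]
        return best


agent = Configurator()
print([agent.step(r) for r in ("temp=25", "temp=18", "temp=21")])
```

Each function can be swapped out or retrained without touching the others, which is the property the modular approach depends on.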

This is not a radical fantasy. It is a proven approach, at least in constrained settings. Machine learning systems have long taught themselves to master video games or board games using similar modular logic. The difference is that LeCun wants to generalize the architecture for real-world applications. If it works, the implications are profound.

Smaller Models, Cheaper Inference, Local Execution

One of the most attractive promises of modular AI is the cost structure. Current large language models, such as those behind ChatGPT, use on the order of hundreds of billions of parameters. A specialized model that does not need to be a generalist could function effectively with only a few hundred million parameters. That is a reduction of roughly three orders of magnitude. Running such a model would require a fraction of the GPU power. In some cases, it could run entirely on device, on a laptop or even a phone.
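The arithmetic behind that comparison is easy to check. The sketch below uses 300 billion and 300 million parameters as stand-ins for "hundreds of billions" and "a few hundred million" (both figures are assumptions, not published numbers) and assumes 16-bit weights, or 2 bytes per parameter. It counts only the memory needed to hold the weights, ignoring activations and other runtime overhead.

```python
import math


def weight_memory_gb(params: float, bytes_per_param: int = 2) -> float:
    """Memory needed just to store the weights, in gigabytes (10**9 bytes)."""
    return params * bytes_per_param / 1e9


large_params = 300e9   # assumed stand-in for "hundreds of billions"
small_params = 300e6   # assumed stand-in for "a few hundred million"

orders_of_magnitude = math.log10(large_params / small_params)

print(f"Size ratio: {orders_of_magnitude:.0f} orders of magnitude")
print(f"Large model weights: {weight_memory_gb(large_params):.1f} GB")
print(f"Small model weights: {weight_memory_gb(small_params):.1f} GB")
```

Under these assumptions the large model needs about 600 GB for its weights alone, which means several data center GPUs, while the small one needs about 0.6 GB, which fits in the memory of an ordinary laptop or phone.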

This changes the economic calculus. Right now, only the largest technology enterprises can afford to run frontier models at a financial loss. Anthropic, Meta, OpenAI, and Google are locked in an arms race of scale. LeCun is proposing a quiet revolution in efficiency. If computing costs continue to fall, as they historically have, the barrier to entry for high-quality AI could drop dramatically. That would make systems that are local, cheap, and more accurate within their domains not just possible but practical.

Directed Data Versus the Internet Firehose

A second critical difference lies in training data. Large language models ingest everything they can scrape from the public internet. This gives them broad knowledge but also deep flaws. They hallucinate, they inherit bias, and they have no way of verifying the truth of the information they were trained on. A modular system flips that approach. Each instance of LeCun’s AI would receive directed data relevant only to its environment and purpose.

The critic module, for example, would be more comprehensive in applications handling sensitive information, like medical records or financial compliance. The perception module would take priority in systems that need to react to real-world events quickly, such as autonomous robotics or industrial monitoring. All modules are trained in ways specific to the AI’s field. This targeted learning reduces noise and improves reliability. It is the difference between asking a librarian who has read every book in existence to help you with a plumbing problem and calling an actual plumber.

There is a philosophical divide here. Large language models respond based on statistical likelihood, generating best-guess answers that then get polished via prompt engineering or agentic wrappers like Claude Code. That works for chat, but it is not how you build a safe surgical assistant or a factory automation system. LeCun believes the large model approach has hit a ceiling. It cannot improve enough, he argues, to realize the aspirational claims made by its creators.

The Investor Bet: Patience Over Hype

A startup with a contrarian thesis and enormous financial backing is not unusual in tech history. But AMI Labs is asking for something rare in the current climate: patience. The billion dollars in funding is not based on revenue or user growth. It is based on a belief that the architecture of tomorrow will look nothing like the architecture of today. Investors are essentially buying an option on a different AI paradigm.

If LeCun is right, the payoff is massive. The industry could shift from a centralized model of compute giants renting out access to a distributed landscape of affordable, specialized, and trustworthy AI agents running on everyday hardware. If he is wrong, the billion dollars becomes an expensive lesson in hubris. Either way, the bet is a reminder that the future of artificial intelligence is not settled. The big models may dominate the headlines, but the most interesting experiments are happening far from the spotlight, in a small lab with a dozen people and a very different idea.

The question now is not whether AI will get smarter. It is what smart really means: a generalist that knows a little about everything, or a specialist that gets one thing exactly right. The industry may soon have to choose.