A Security Patch That Missed the Mark

A significant security vulnerability has been uncovered within Lovable, a popular platform for building applications with artificial intelligence. The flaw, which reportedly remains unpatched for a vast swath of user projects, exposes sensitive data including proprietary source code, database credentials, and private user information. This incident serves as a stark lesson in the perils of inconsistent security implementations, especially for platforms handling critical development assets.

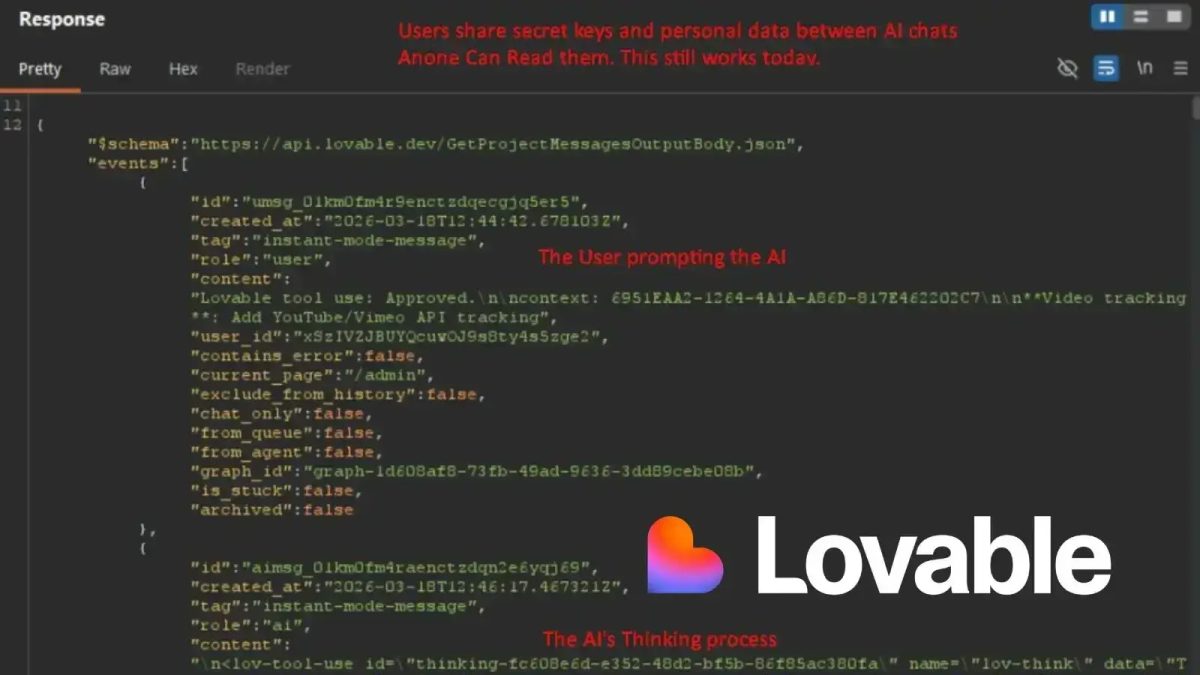

How a Simple API Check Failed

At the heart of this security debacle is an inconsistent authorization check in Lovable’s application programming interface (API). According to a public disclosure on X by a security researcher known as @weezerOSINT, the platform appears to have corrected the flaw only for projects created after a certain point. For these newer projects, unauthorized access attempts correctly trigger a “403 Forbidden” response, effectively locking the digital door.

The critical failure, however, lies in the treatment of legacy work. For any project created before November 2025, the same unauthorized request returns a “200 OK” status. This is the web’s equivalent of a green light and an open invitation, granting full access to the project’s underlying data. It’s a classic case of fixing the front gate but leaving the back window wide open for anything built before last season.
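To make the difference concrete, here is a minimal sketch of the two behaviors. It is not Lovable’s actual code: the `Project` record, the function names, and the exact cutoff day are all hypothetical, chosen only to mirror the 403-versus-200 split described in the disclosure.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical placeholder: the disclosure says "before November 2025",
# but does not give an exact day.
FIX_DATE = date(2025, 11, 1)


@dataclass(frozen=True)
class Project:
    project_id: str
    owner_id: str
    created_on: date


def flawed_status(project: Project, requester_id: str) -> int:
    """Ownership is enforced only for projects created on or after the fix."""
    if project.created_on >= FIX_DATE and requester_id != project.owner_id:
        return 403
    return 200  # legacy projects fall through to full access


def consistent_status(project: Project, requester_id: str) -> int:
    """Ownership is enforced for every project, regardless of its age."""
    if requester_id != project.owner_id:
        return 403
    return 200


legacy = Project("p-old", owner_id="alice", created_on=date(2025, 6, 1))
recent = Project("p-new", owner_id="alice", created_on=date(2025, 12, 1))
```

In this sketch, a stranger requesting `legacy` gets 200 from `flawed_status` but 403 from `consistent_status`. The only difference is that the second function never consults the creation date before checking ownership.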

The Alarming Scope of Exposed Data

The data exposed by this API misconfiguration is far from trivial. The researcher demonstrated that a free account could access another user’s complete project source code, administrative panels, and infrastructure secrets like database login credentials. Imagine a competitor or malicious actor not just seeing your finished app, but walking through your entire workshop, blueprints, and security system codes.

Perhaps more insidiously, the flaw also exposes full AI conversation histories. These logs often contain detailed technical dialogues between developers and the AI assistant, discussing database schemas, backend logic, and implementation strategies. In one cited example, access to a Danish nonprofit’s admin panel revealed chat logs that laid bare user data structures, including names and email addresses.

Legacy Projects Left in the Lurch

What makes this vulnerability particularly concerning is its blanket effect on older projects, regardless of their current activity. The researcher provided a telling example: a project that had been actively edited and updated just ten days prior was still completely exposed due solely to its original creation date. This creates a dangerous scenario where developers believe their actively maintained work is secure, while in reality, its foundational data is wide open.

The impact extends beyond individual hobbyists. The disclosure notes that employees from major tech firms including Nvidia, Microsoft, Uber, and Spotify have accounts on Lovable. If any internal tools, prototypes, or experiments were built on the platform before the November 2025 cutoff, that proprietary code and any embedded credentials could now be sitting in plain sight. The potential for corporate espionage or credential theft is significant.

A Delayed Response and a Systemic Problem

Alarmingly, the vulnerability was reportedly disclosed to Lovable 48 days before it was made public, yet a comprehensive fix for affected legacy projects remains elusive. The issue was apparently marked as a duplicate internally, a bureaucratic misstep that left thousands of projects vulnerable for weeks. This delay highlights a common tension in fast-moving tech: the race to patch new issues versus the arduous task of retroactively securing old ones.

The situation underscores a critical principle in platform security: consistency is key. A security patch that only protects new creations while abandoning the old is like a vaccine that only works on people born after Tuesday. It addresses the symptom for a subset of users but fails to cure the underlying disease across the entire ecosystem. For developers, this incident is a cautionary tale about the hidden risks of low-code and AI-powered platforms, where you ultimately cede control over fundamental security layers.

Moving Forward: Trust and Verification

For the broader tech community, the Lovable incident is a reminder to scrutinize the security posture of any platform hosting intellectual property. It raises pointed questions. How many other platforms have similar inconsistent security models lurking in their legacy systems? When a vendor says a vulnerability is “fixed,” does that fix apply to your existing data, or just to new data moving forward?
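One way to answer that last question for yourself is to probe your own projects, old and new, as an unauthorized caller and see which ones still answer. The sketch below assumes a `probe` callable that you supply (it takes a project ID and returns the HTTP status an unauthorized request received). The `ProbeTarget` type and the set of status codes counted as a denial are illustrative choices, not part of any vendor API.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Iterable, List

# Status codes treated here as "access denied" for an unauthorized caller.
DENIED = frozenset({401, 403, 404})


@dataclass(frozen=True)
class ProbeTarget:
    project_id: str
    created_on: date


def find_exposed(
    targets: Iterable[ProbeTarget],
    probe: Callable[[str], int],
) -> List[ProbeTarget]:
    """Return every target whose unauthorized probe was NOT denied."""
    return [t for t in targets if probe(t.project_id) not in DENIED]
```

Including projects from both sides of a vendor’s stated fix date is the point: a check that passes only on recent projects says nothing about legacy ones. Only run a probe like this against projects you own or are authorized to test.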

The path forward for Lovable and platforms like it involves not just a technical patch, but a restoration of trust. A transparent remediation plan for all affected users, clear communication on the fix’s scope, and perhaps third-party security audits will be necessary. For developers, the takeaway is to always assume that your tools could become a vector for exposure. Using separate secrets for each project, regularly auditing permissions, and understanding the shared responsibility model of cloud platforms are no longer just best practices; they are essential survival skills in a world where your AI assistant’s memory might just be someone else’s open book.
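As a starting point for that kind of audit, the sketch below scans exported source code or chat logs for strings that look like embedded credentials. The patterns are illustrative and far from exhaustive, and the names `PATTERNS` and `scan_text` are made up for this example. A dedicated secret scanner will catch much more.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; real scanners ship far larger rule sets.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "database_url_with_password": re.compile(
        r"\b(?:postgres(?:ql)?|mysql)://[^\s:/@]+:[^\s@]+@"
    ),
    "hardcoded_secret": re.compile(
        r"\b(?:api[_-]?key|secret|password)\b\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}


def scan_text(text: str) -> List[Tuple[int, str]]:
    """Return (line_number, pattern_name) for every suspicious line."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, name))
    return findings
```

Anything a scan like this flags in a project that was exposed should be treated as compromised and rotated, not merely removed from the file.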